How has Docker changed my life as a developer? To be honest: completely!

Before I explain why and how, let’s answer the obvious question: what is Docker? It is the world’s leading containerization platform, and its vision is “Build, Ship, and Run Any App, Anywhere”, achieved by decoupling applications from the infrastructure they run on.

Besides the obvious and well-known benefits of containerization

- continuous delivery

- easy continuous integration

- development and unit testing in a production-like environment

- one package for all stages

- easy to manage with orchestration systems like Docker Swarm, Kubernetes, or Amazon Elastic Container Service (ECS)

Docker has also profoundly changed my daily workflow.

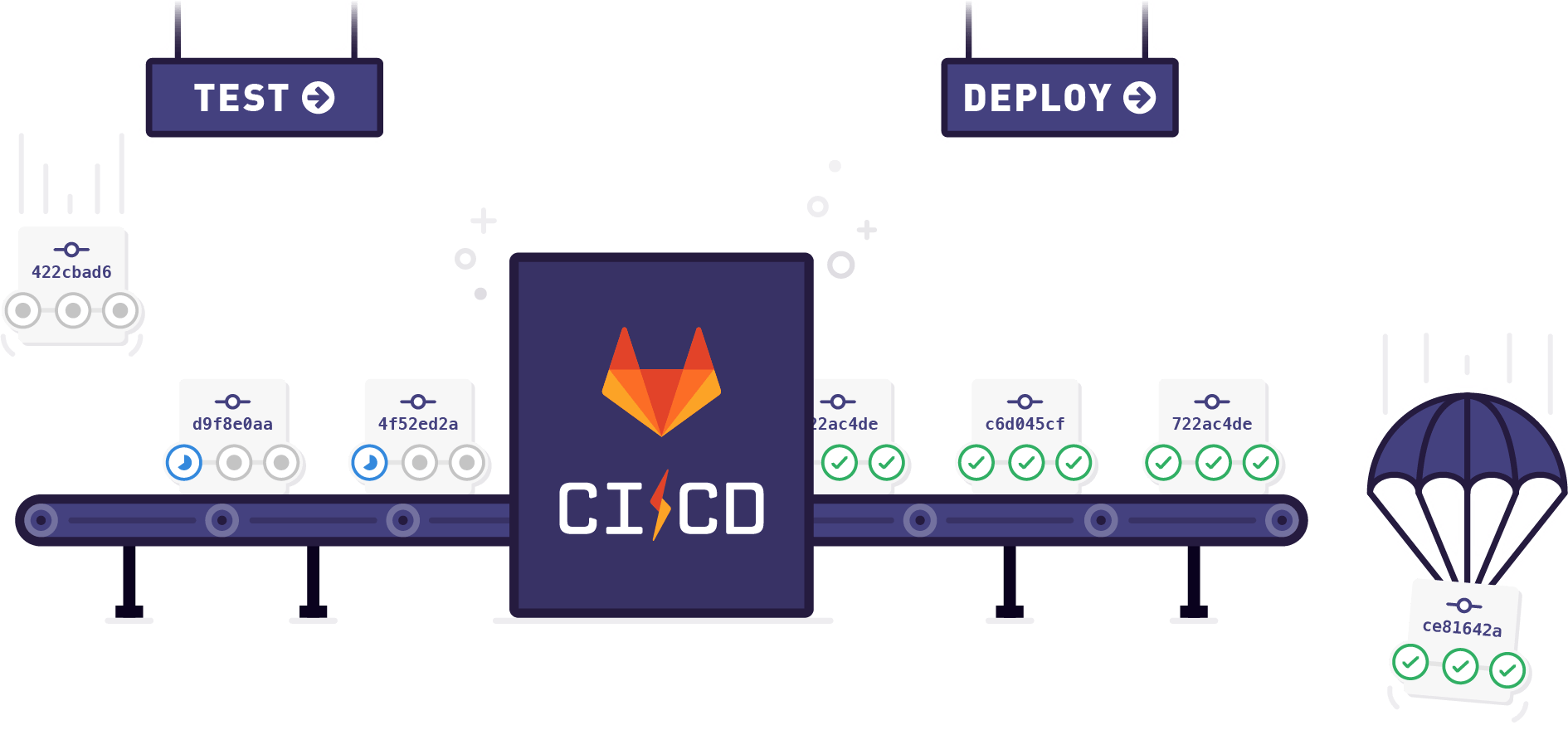

If you adopt newer cloud development practices such as the Twelve-Factor App methodology and continuous integration / continuous delivery (CI/CD) in your software development, your life gets much easier. Both version rollbacks and software updates become straightforward; in some cases it is even easier to roll back than to fix the problem with a new release.

Docker simplifies managing different development environments

Besides the advantages of containerized deployment and development mentioned above, Docker also changed my daily work. Prepared Docker containers with preinstalled software such as databases, Linux distributions, command line interface (CLI) tools for Amazon Web Services (AWS), Cloud Foundry, and Kubernetes (K8s), or simply application servers like Tomcat (incl. Java), make it easy to run several different development environments side by side on my notebook. Today’s notebooks are high-performance machines, and I can use that performance for my work without storing several 40 GB VMs locally or hosting them on our servers, which often have less memory or CPU power than my notebook could provide anyway. All these containers run with minimal overhead, so nearly all resources are available for my actual work.

Docker’s built-in capabilities make it easy to start containers with defined test data (e.g. a database with a set of entries, or test documents) for each run of my unit tests. Fast startup and initialization techniques make it possible to launch a container within a few seconds. For a client project designed as a microservice architecture, I set up the CI tool (GitLab CI) to run integration tests automatically: during the build, all microservices are started and API tests are run against each other. This build step checks for incompatible changes in the defined APIs. An API-first approach should help to avoid such errors, but running these tests automatically adds another quality gate to our project.

Docker containers with preinstalled software such as command line interface (CLI) management tools let me encapsulate the required environment within the Docker runtime, without any impact on other environments or on my notebook’s own installation. I just start the Docker container with “docker start ” and connect via ssh, and my terminal window is in a completely new environment where the K8s CLI kubectl is installed. The environment is already prepared to connect to my cluster, so I can start working right away. Other useful tools like the Swagger Editor can also be launched locally in a Docker container, which is very helpful in client projects where the cloud-hosted version may not be used.
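The same workflow can be sketched with a small Python helper. It assumes an existing tool container named `k8s-tools` (a hypothetical name) with kubectl installed, and it opens the shell with `docker exec` instead of ssh, which avoids running an SSH server inside the container. This is an alternative to the ssh approach described above, not a reproduction of the exact setup.

```python
import subprocess


def tool_shell_commands(container: str, shell: str = "/bin/sh") -> list[list[str]]:
    """Return the commands that start a stopped tool container and open a shell in it."""
    return [
        ["docker", "start", container],  # also succeeds if the container is already running
        # --interactive keeps STDIN open, --tty allocates a terminal for the shell
        ["docker", "exec", "--interactive", "--tty", container, shell],
    ]


def open_tool_shell(container: str = "k8s-tools") -> None:
    # "k8s-tools" is a hypothetical container name used for illustration
    start, exec_shell = tool_shell_commands(container)
    # `docker start` prints the container name; discard it to keep the terminal clean
    subprocess.run(start, check=True, stdout=subprocess.DEVNULL)
    # hand the current terminal over to the shell inside the container
    subprocess.run(exec_shell, check=False)
```

Calling `open_tool_shell()` from a terminal drops you into the container, where kubectl is available without being installed on the notebook itself.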

Tools like Docker Compose make it easy to launch larger environments consisting of several containers, for example to run integration tests.
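As a rough sketch, assuming Docker Compose v2 (the `docker compose` plugin) and a compose file that describes all services, an integration test run brings the environment up, runs the tests, and always tears everything down again. The file name `compose.yaml` and the test command are hypothetical; the `run` parameter is injectable so the flow can be checked without Docker.

```python
import subprocess
from typing import Callable, Sequence

Runner = Callable[[Sequence[str]], None]


def _run(cmd: Sequence[str]) -> None:
    # check=True makes a failing command raise CalledProcessError
    subprocess.run(list(cmd), check=True)


def run_integration_tests(compose_file: str, test_cmd: Sequence[str],
                          run: Runner = _run) -> None:
    """Start the whole environment, run the tests, and always tear it down."""
    base = ["docker", "compose", "--file", compose_file]
    # --wait blocks until services are running (or healthy, if they define a
    # health check) and implies detached mode
    run([*base, "up", "--wait"])
    try:
        run(test_cmd)
    finally:
        # remove containers, the project network and volumes so the next run starts clean
        run([*base, "down", "--volumes"])
```

Using `--wait` keeps the tests from starting against containers that are still booting, and the `finally` block ensures a failed test run does not leave the environment behind.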

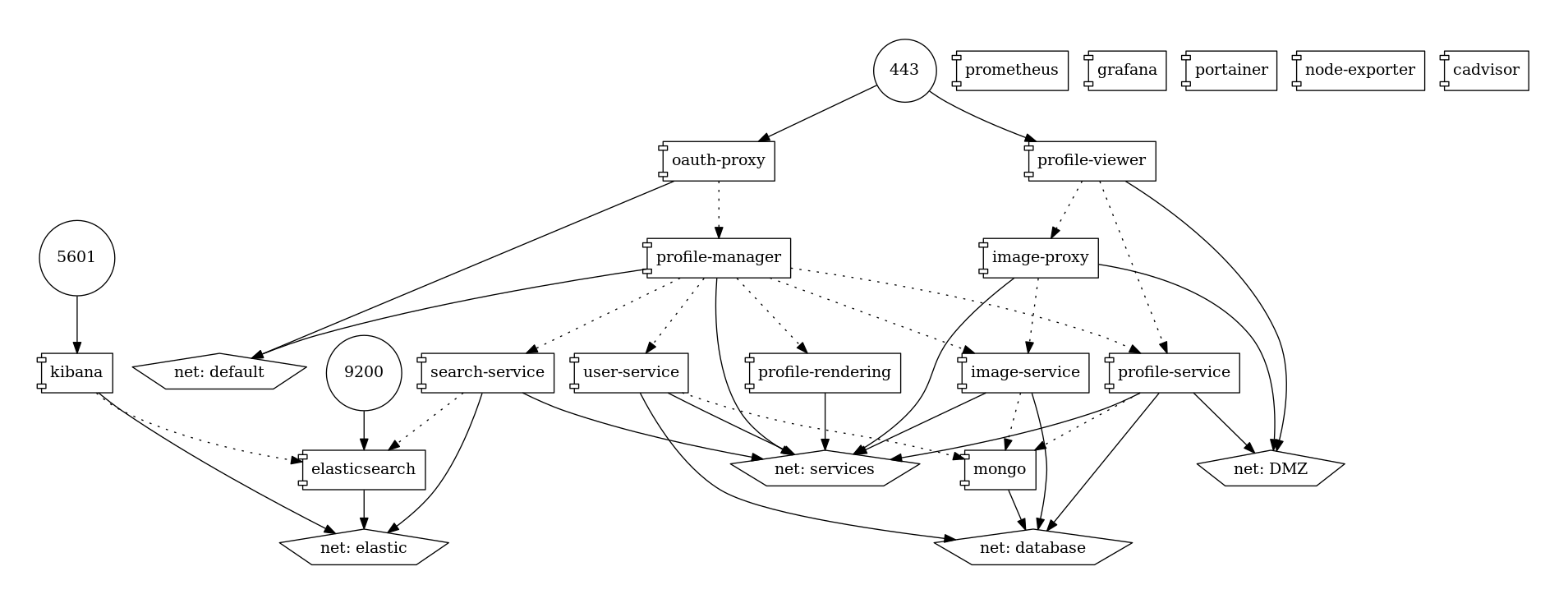

I have worked with Docker containers in several client projects as well as in internal projects like the fme Contract Management or the fme EmployeeProfileDB. The fme EmployeeProfileDB is a web application for our consultants. It allows them to create and maintain the personal employee profiles that we use in our sales process. Instead of filling out Word templates, users simply enter their data, and the app renders both DOCX and PDF versions on the fly using the up-to-date template. Although the app consists of 16 Docker containers (including some logging and monitoring tools), it is very easy to deploy and maintain. Prebuilt containers like LibreOffice and OAuth2 Proxy saved a lot of time during the development phase.

We use technologies like Docker, Kubernetes, and Pivotal Cloud Foundry nearly every day, both internally and in client projects. They help us set up infrastructure on IaaS platforms and build CI/CD pipelines that automate operational activities such as updates, backups, and restore/recovery for our clients.

My conclusion after two years of working with Docker: I love it. It makes my life so much easier, and the days when my 160 GB SSD was permanently full and I had to wait for a VM to be copied and started are gone.
