This article presents a solution that automatically collects billing information from several AWS accounts and presents it in a concise format in one or more Quicksight dashboards. The application can run in its own AWS account, so access can be restricted at the account level and only designated persons are authorized to see the costs.

AWS Cost Management Platform and Consolidated Billing

AWS provides a complete toolset for this purpose, consisting of AWS Billing, Cost Explorer, and other services. These tools give a focused overview of costs and usage and allow drilling down into the details of most services. For a single AWS account, this tooling is sufficient to get the job done, even though the user experience may not match that of more specialized third-party tools. To cover the governance side, dedicated IAM permissions are required to access all this information.

AWS Organizations, which manages all AWS accounts associated with it, provides extended functionality (consolidated billing) to centralize cost monitoring and management. However, in most companies and large enterprises only very few people are allowed to access the management account that holds the billing data. This makes it hard for a project lead to get the required information. Depending on the number of AWS accounts a team uses, an authorized person has to either log in to every account regularly to check the costs or rely on Cost and Usage Reports, which can be exported automatically to S3. These reports are very detailed (perhaps too detailed for simple use cases) and require custom tooling to extract the required information.

AWS has published a solution called Cloud Intelligence Dashboards, a collection of Quicksight dashboards that use these Cost and Usage Reports as one of their data sources. Besides this solution, there are company-internal cost control tools, sometimes based on the same AWS services, that can be “rented”. All these solutions have their advantages and use cases, but also drawbacks, mostly related to their costs and sometimes to overly broad IAM permission requirements.

An approach for simple use cases

Sometimes it is fully sufficient to present some information concisely to stay informed about general trends and total numbers. If something looks strange or falls outside the expected range, a more detailed analysis can be performed, for instance with the AWS tooling mentioned above.

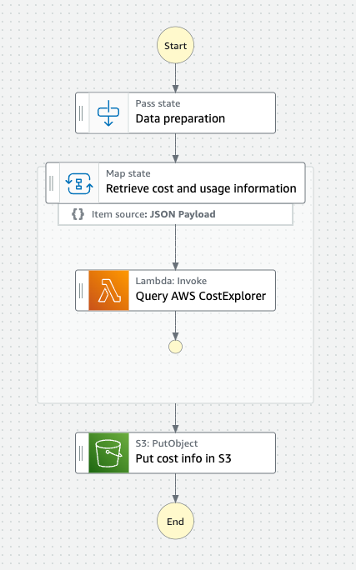

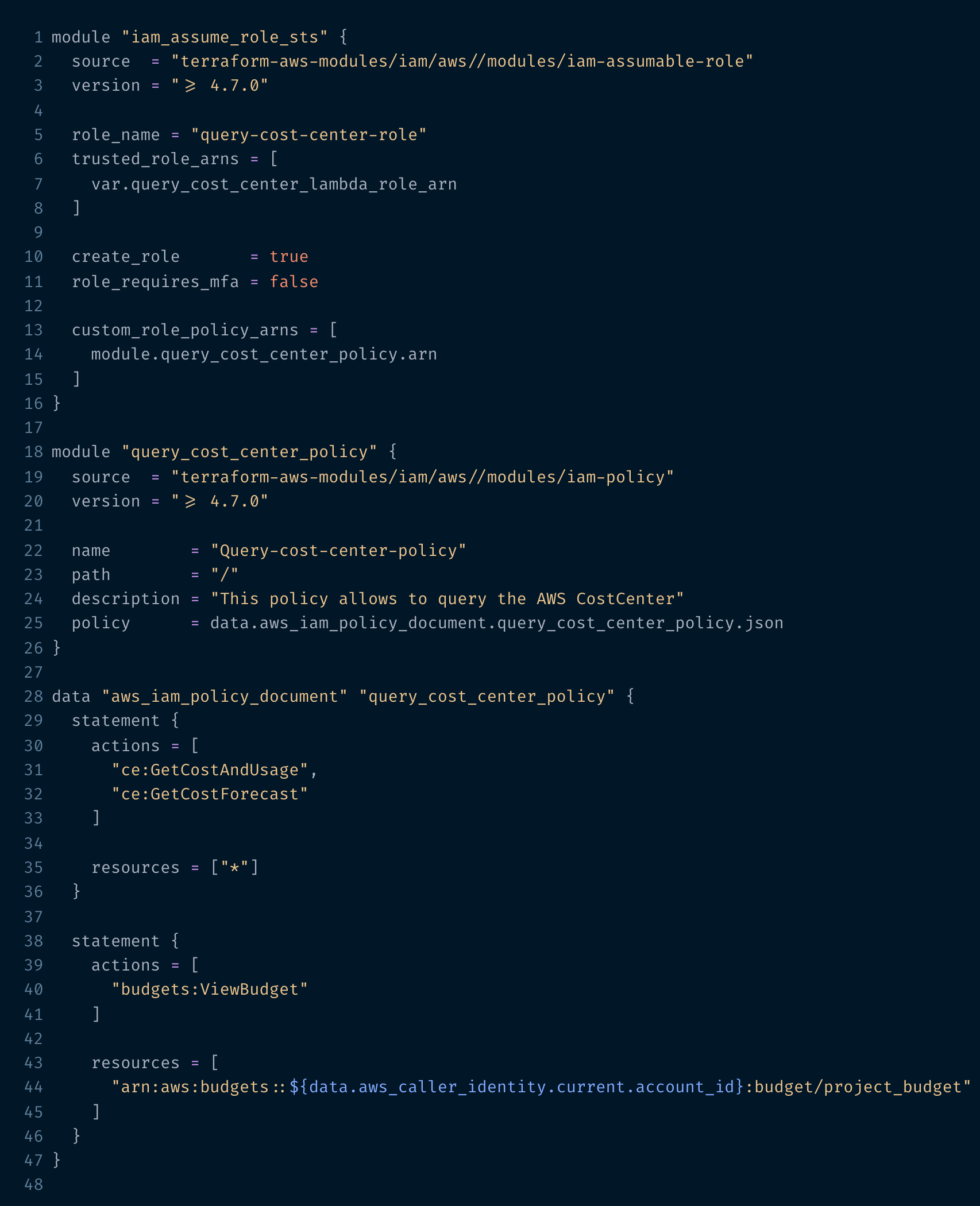

Following this idea, a small application built with Step Functions, Lambda, S3, and Quicksight retrieves cost-related data for every designated AWS account and stores it as a JSON file in S3. Quicksight, which supports S3 as a data source, reads this data directly and makes it available for one or more dashboards displaying various cost-related charts and tables. The workflow shown below is triggered regularly (e.g., once a day) by an EventBridge rule, so that up-to-date information is always available.
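The workflow can be expressed in the Amazon States Language (ASL). The following Python sketch builds such a definition as JSON. The state names mirror the steps described in this article, while the account list, the Lambda ARNs, the concurrency limit, and the schedule expression are hypothetical placeholders. It also assumes that the "Data preparation" step is a simple Pass state and that the storage step is a second Lambda function; both are only one of several possible implementations.

```python
import json

# Hypothetical placeholders: replace with real account IDs and function ARNs.
ACCOUNTS = [
    {"id": "111111111111", "name": "project-dev"},
    {"id": "222222222222", "name": "project-prod"},
]
COLLECTOR_ARN = "arn:aws:lambda:eu-central-1:999999999999:function:collect-account-costs"
STORE_ARN = "arn:aws:lambda:eu-central-1:999999999999:function:store-cost-report"

# Hypothetical schedule expression for the EventBridge rule: every day at 06:00 UTC.
SCHEDULE_EXPRESSION = "cron(0 6 * * ? *)"


def build_definition(accounts, collector_arn, store_arn, max_concurrency=5):
    """Return the state machine definition (Amazon States Language) as a JSON string."""
    definition = {
        "Comment": "Collect cost data from several AWS accounts",
        "StartAt": "Data preparation",
        "States": {
            # Step 1: a Pass state injects the static list of accounts.
            "Data preparation": {
                "Type": "Pass",
                "Result": {"accounts": accounts},
                "Next": "Collect costs",
            },
            # Step 2: run the collector Lambda once per account,
            # with at most max_concurrency parallel invocations.
            "Collect costs": {
                "Type": "Map",
                "ItemsPath": "$.accounts",
                "MaxConcurrency": max_concurrency,
                "ItemProcessor": {
                    "ProcessorConfig": {"Mode": "INLINE"},
                    "StartAt": "Get account costs",
                    "States": {
                        "Get account costs": {
                            "Type": "Task",
                            "Resource": collector_arn,
                            "End": True,
                        }
                    },
                },
                # Default ResultPath "$": the state output is the array of results.
                "Next": "Store results",
            },
            # Step 3: write the array to S3 (here via a second Lambda function).
            "Store results": {"Type": "Task", "Resource": store_arn, "End": True},
        },
    }
    return json.dumps(definition, indent=2)


if __name__ == "__main__":
    print(build_definition(ACCOUNTS, COLLECTOR_ARN, STORE_ARN))
```

The resulting string could be passed as the `definition` argument of boto3's Step Functions `create_state_machine` call (together with `name` and `roleArn`) or embedded in an infrastructure-as-code template. Because the Map state keeps the default `ResultPath`, its output is the plain array of per-account results, which becomes the input of the storage step.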

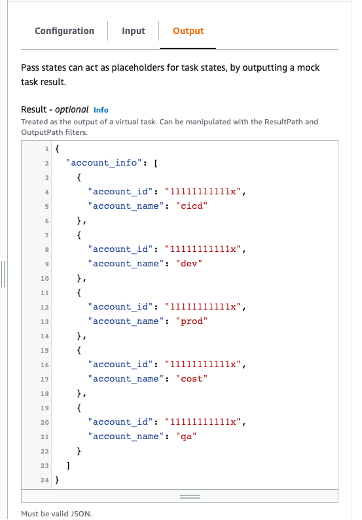

1. The “Data preparation” step provides the AWS account IDs and names as input for the subsequent Map State.

Input data for Map State

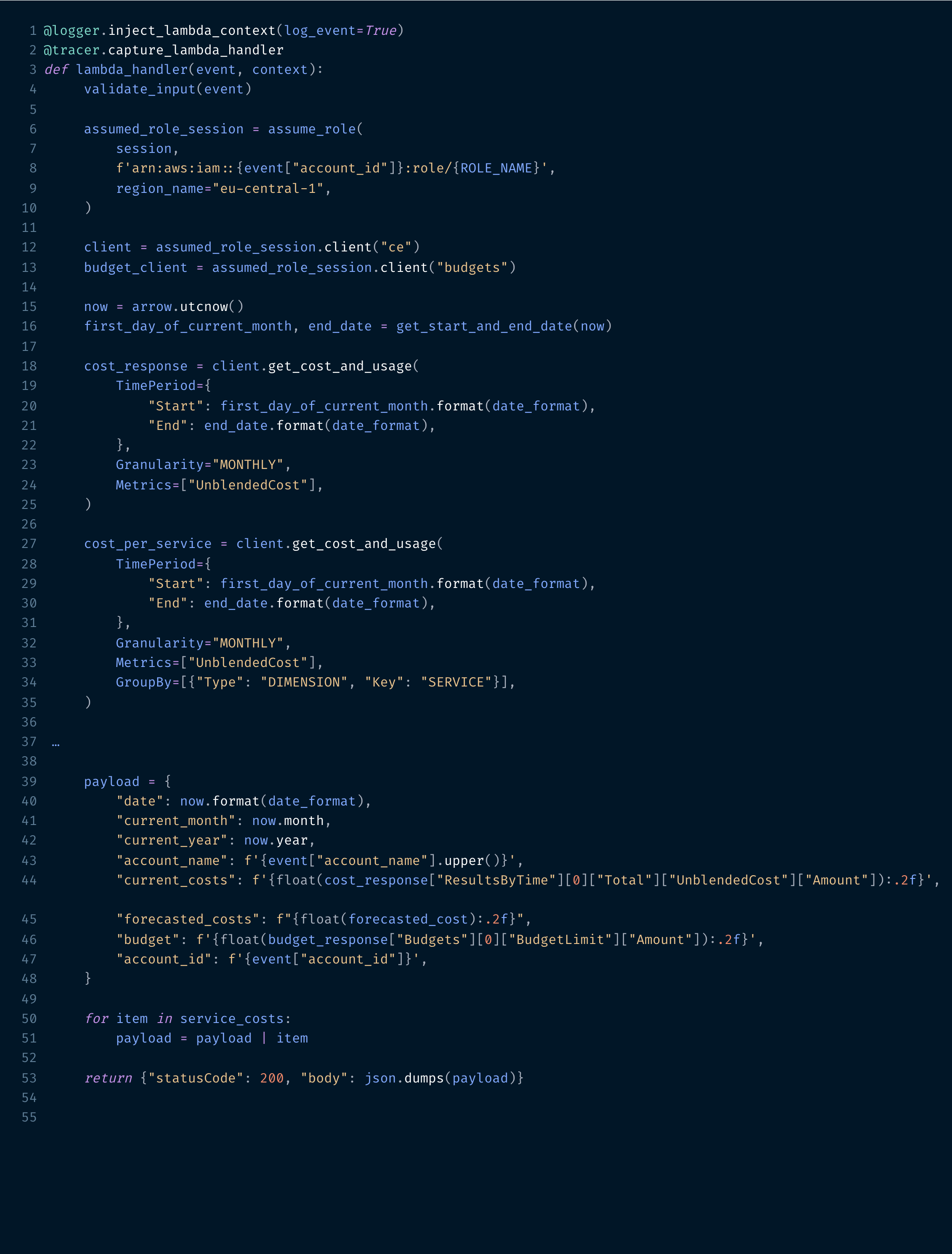

2. For every array element (i.e., every AWS account), the Map State starts an instance of a Lambda function that assumes an IAM role in the relevant account and queries the AWS Cost Explorer and AWS Budgets APIs to collect the required information: current total costs, costs per service, cost forecasts…

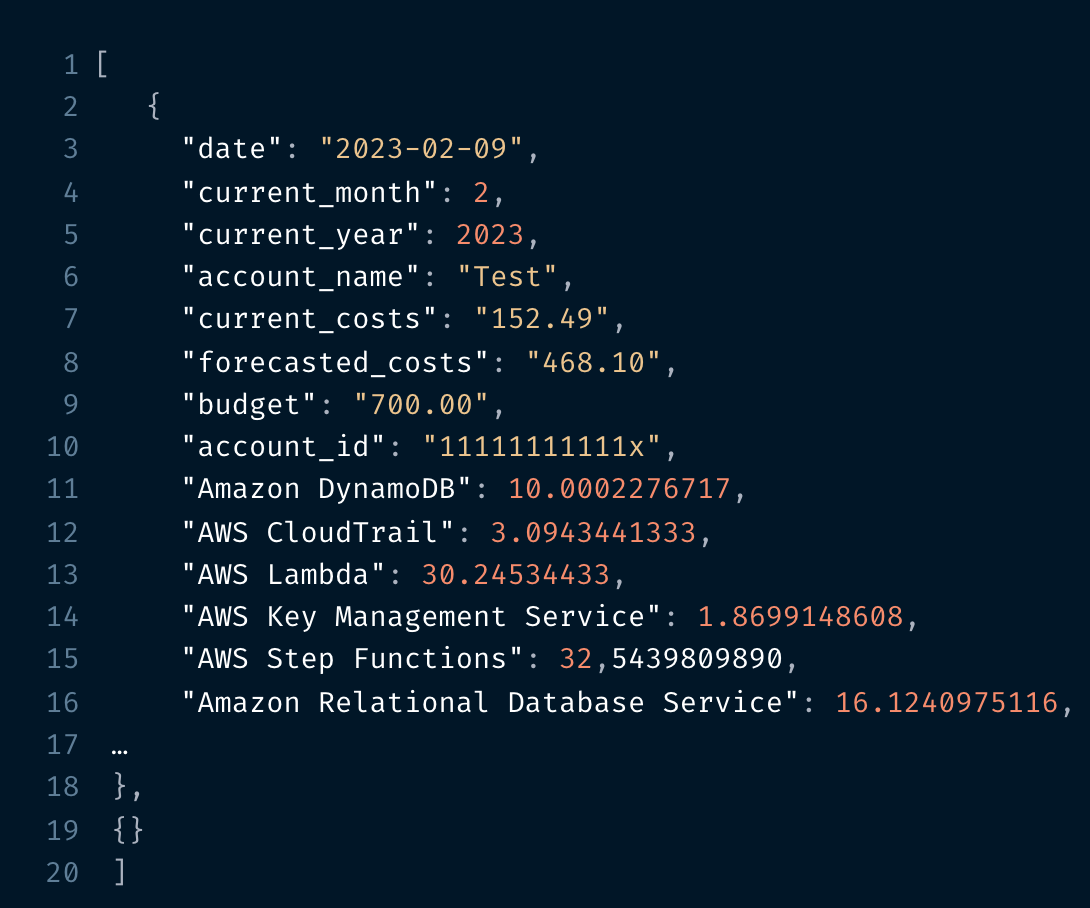

The outcome of the Map State is an array consisting of the cost data retrieved from every AWS account.

3. In the last workflow step, this data is stored as a JSON file in an S3 bucket. Every file name contains the current date, which keeps the names unique as long as the workflow runs at most once a day.
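A minimal Python sketch of the two Lambda functions behind steps 2 and 3 could look like the following. It assumes a role named `cost-reader` in every member account, a bucket named `my-cost-reports-bucket`, and a `cost-reports/` key prefix (all hypothetical), and it uses the boto3 calls `sts.assume_role`, `ce.get_cost_and_usage`, `ce.get_cost_forecast`, `budgets.describe_budgets`, and `s3.put_object`. The query logic takes the clients as parameters so it can be exercised without AWS credentials; boto3 is only imported inside the handlers.

```python
import datetime as dt
import json

ROLE_NAME = "cost-reader"          # hypothetical role name in every member account
BUCKET = "my-cost-reports-bucket"  # hypothetical bucket for the daily JSON files


def month_range(today):
    """Return (first day of this month, first day of next month) as ISO strings."""
    start = today.replace(day=1)
    end = (start + dt.timedelta(days=32)).replace(day=1)
    return start.isoformat(), end.isoformat()


def report_key(today, prefix="cost-reports"):
    """S3 key containing the current date, e.g. cost-reports/2024-05-17.json."""
    return f"{prefix}/{today.isoformat()}.json"


def collect_costs(account, ce, budgets, today):
    """Query Cost Explorer and Budgets through already-authenticated clients."""
    start, end = month_range(today)
    # Month-to-date costs grouped by service (NextPageToken paging omitted for brevity).
    usage = ce.get_cost_and_usage(
        TimePeriod={"Start": start, "End": end},
        Granularity="MONTHLY",
        Metrics=["UnblendedCost"],
        GroupBy=[{"Type": "DIMENSION", "Key": "SERVICE"}],
    )
    services = {}
    for period in usage["ResultsByTime"]:
        for group in period["Groups"]:
            name = group["Keys"][0]
            amount = float(group["Metrics"]["UnblendedCost"]["Amount"])
            services[name] = services.get(name, 0.0) + amount
    # Forecast for the rest of the current month (from today until month end).
    forecast = ce.get_cost_forecast(
        TimePeriod={"Start": today.isoformat(), "End": end},
        Metric="UNBLENDED_COST",
        Granularity="MONTHLY",
    )
    found = budgets.describe_budgets(AccountId=account["id"]).get("Budgets", [])
    return {
        "account_id": account["id"],
        "account_name": account["name"],
        "month_to_date": round(sum(services.values()), 2),
        "forecast_rest_of_month": round(float(forecast["Total"]["Amount"]), 2),
        "budgets": {b["BudgetName"]: float(b["BudgetLimit"]["Amount"])
                    for b in found if "BudgetLimit" in b},
        "services": {name: round(cost, 2) for name, cost in services.items()},
    }


def collector_handler(event, context):
    """Step 2: called once per Map item, e.g. {"id": "111111111111", "name": "dev"}."""
    import boto3  # lazy import: the helpers above stay testable without boto3

    creds = boto3.client("sts").assume_role(
        RoleArn=f"arn:aws:iam::{event['id']}:role/{ROLE_NAME}",
        RoleSessionName="cost-collector",
    )["Credentials"]
    session = boto3.Session(
        aws_access_key_id=creds["AccessKeyId"],
        aws_secret_access_key=creds["SecretAccessKey"],
        aws_session_token=creds["SessionToken"],
    )
    # Cost Explorer and Budgets are served from us-east-1.
    ce = session.client("ce", region_name="us-east-1")
    budgets = session.client("budgets", region_name="us-east-1")
    return collect_costs(event, ce, budgets, dt.datetime.now(dt.timezone.utc).date())


def store_handler(event, context):
    """Step 3: receives the Map output (a list) and writes it to S3."""
    import boto3

    key = report_key(dt.datetime.now(dt.timezone.utc).date())
    boto3.client("s3").put_object(
        Bucket=BUCKET,
        Key=key,
        Body=json.dumps(event).encode("utf-8"),
        ContentType="application/json",
    )
    return {"bucket": BUCKET, "key": key}
```

Note that Cost Explorer API requests are not free (AWS lists USD 0.01 per request), so a daily run costs roughly two Cost Explorer requests per account. The nested `services` object is a design choice for readability; a flatter layout with one record per account and service may be easier to work with in Quicksight.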

Apart from the Step Functions workflow and the Lambda function, which can for instance run in a dedicated AWS account to simplify permission management, an IAM role needs to be deployed to every account whose costs should be retrieved. This role must trust the Lambda function's execution role and grant the permissions needed to query AWS Cost Explorer and AWS Budgets. An example written in Terraform is given below.
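To illustrate what such a role has to contain, the following Python sketch generates the three IAM policy documents involved: the trust policy of the role in each member account, its read-only permission policy, and the `sts:AssumeRole` permission that the collector's execution role needs. The role names and the ARN are hypothetical placeholders. The generated JSON could be fed into any infrastructure-as-code tool, for example into the `assume_role_policy` argument of Terraform's `aws_iam_role` resource.

```python
import json

# Hypothetical ARN of the collector Lambda's execution role in the tooling account.
COLLECTOR_ROLE_ARN = "arn:aws:iam::999999999999:role/cost-collector-lambda"


def trust_policy(collector_role_arn):
    """Trust policy of the role in each member account: only the collector may assume it."""
    return {
        "Version": "2012-10-17",
        "Statement": [{
            "Effect": "Allow",
            "Principal": {"AWS": collector_role_arn},
            "Action": "sts:AssumeRole",
        }],
    }


def cost_read_policy():
    """Read-only permissions for the Cost Explorer and Budgets queries."""
    return {
        "Version": "2012-10-17",
        "Statement": [{
            "Effect": "Allow",
            # budgets:ViewBudget is the permission behind DescribeBudgets.
            "Action": ["ce:GetCostAndUsage", "ce:GetCostForecast", "budgets:ViewBudget"],
            "Resource": "*",
        }],
    }


def assume_policy(account_ids, role_name="cost-reader"):
    """Policy for the collector's execution role: may assume the reader role in each account."""
    return {
        "Version": "2012-10-17",
        "Statement": [{
            "Effect": "Allow",
            "Action": "sts:AssumeRole",
            "Resource": [f"arn:aws:iam::{a}:role/{role_name}" for a in account_ids],
        }],
    }


if __name__ == "__main__":
    print(json.dumps(trust_policy(COLLECTOR_ROLE_ARN), indent=2))
    print(json.dumps(cost_read_policy(), indent=2))
```

Restricting the trust policy to one specific execution role, instead of a whole account, keeps the cost data readable only by the collector.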

Data visualization with Quicksight

Quicksight supports S3 as a direct data source: all it needs is a manifest file describing the location and format of the data stored in the bucket. This is convenient for data whose structure never or only rarely changes.
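For the setup described here, such a manifest can be very short. The following sketch generates one that points Quicksight at every file under a key prefix. The bucket name and the `cost-reports/` prefix are hypothetical; the fields `fileLocations`, `URIPrefixes`, and `globalUploadSettings` follow the Quicksight manifest format for S3 data sources.

```python
import json


def build_manifest(bucket, prefix="cost-reports/"):
    """Return a Quicksight S3 manifest covering every JSON file under the prefix."""
    manifest = {
        # A prefix (instead of a list of URIs) also matches files added later.
        "fileLocations": [{"URIPrefixes": [f"s3://{bucket}/{prefix}"]}],
        "globalUploadSettings": {"format": "JSON"},
    }
    return json.dumps(manifest, indent=2)


if __name__ == "__main__":
    # Hypothetical bucket name; upload the output e.g. as manifest.json.
    print(build_manifest("my-cost-reports-bucket"))
```

Because the manifest references a prefix instead of individual files, each new daily file should be picked up at the next dataset refresh. It is probably safer to store the manifest itself outside that prefix, so that Quicksight does not try to parse it as data.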

A more involved setup including AWS Glue and Amazon Athena might be beneficial if either a lot of details (not only basic cost information) are queried or many different AWS services are used over time in the various AWS accounts. Quicksight might run into problems loading this kind of data, as its structure keeps changing and the manifest file requires frequent updates. A Glue crawler combined with an Athena table might be the better approach in such a scenario.

As soon as a new dataset based on the S3 bucket has been created, one or more dashboards can be built on top of it. Depending on the specific requirements, they can present overview data, as in the example below, or go into more detail. How to create these dashboards is out of the scope of this article, but Quicksight offers enough tooling to start simple and go a long way towards sophisticated visualizations.

A Quicksight dashboard can either be shared with the individuals who need this information, or a scheduled email report can be set up. Quicksight then sends an email to all specified recipients, which can include an image of the dashboard as well as some data in CSV format. This feature helps a lot, because it is not always necessary to log in to keep the costs under control: simply receiving an automated message, for example every day or once a week, can be enough to stay informed.

Wrapping up

Cost monitoring is an important topic for every project, from small to large. AWS offers various tools to stay up to date, but the task becomes tedious when following AWS best practices and separating an application into different stages and AWS accounts. There are third-party or company-internal tools that help in this situation, but it is not always possible to use them (especially in an enterprise setup), or they come with their own drawbacks.

This blog post has presented a small-scale application that provides enough information and detail to monitor the costs of small to medium-sized projects. It has its limitations, as it might not be powerful enough when dealing with tens or even hundreds of AWS accounts, but that is usually not the typical setup of a single project.

Photo credits

Photo by Anna Nekrashevich: https://www.pexels.com/de-de/foto/lupe-oben-auf-dem-dokument-6801648/
